Before you promote a Lovable app to production, it needs a code review. Not a casual glance — a structured Lovable health check that catches the security gaps, architectural debt, and compliance failures that the AI won't tell you about.

The rise of "vibe-coding" via platforms like Lovable or Replit has fundamentally changed the speed at which business units can innovate. What used to take a specialised engineering team six months can now be prompted into existence by a savvy business user in a single afternoon. However, for IT and Security teams, this velocity introduces a significant governance gap — and closing it requires a dedicated layer of AI code governance.

Quality Clouds provides the AI code governance layer that sits between your Lovable developers and your production environment — ensuring that what gets built fast also gets built right.

What Is a Lovable Health Check?

A Lovable health check is a structured review of an AI-generated application — covering security posture, code quality, dependency management, and GDPR compliance — before it is promoted to a production environment. It is the applied practice of AI code governance: ensuring that code generated at speed meets the standards required for enterprise use.

Unlike a penetration test, which probes live endpoints, a health check performs static analysis of the source code to identify risks that would never surface in a sandbox.

A complete Lovable health check covers four layers:

Security: RLS policy coverage, authentication guard logic, hardcoded secrets, PII handling

Code Quality: component size, React Hook hygiene, dependency pinning, "context rot"

Compliance: GDPR, encryption at rest, data retention rules

Performance: Supabase query efficiency, infinite recursion patterns, memory leaks

The Problem: Common Pitfalls in "Vibe-Coded" Apps

The most dangerous aspect of AI-generated code is its "confident" failure. Lovable apps typically use Supabase as a backend, and while the AI is excellent at creating functional UI, it consistently overlooks the invisible security and architectural layers required for enterprise use.

As highlighted in the ML6 report, "The Anatomy of a Lovable App," these projects often follow a "2-tier architecture" where the browser talks directly to the database. This simplicity is powerful, but it places the entire security burden on Row Level Security (RLS) policies — rules that are often generated by the LLM itself.

Through hundreds of audits, we consistently see the following issues:

The RLS Bypass (The "Open Door" Policy): AI often forgets to enable or correctly configure RLS. Without these policies, anyone who has your project's public Supabase URL and anon key, both of which ship in the browser bundle, can query every exposed table directly.

Reversed Auth Guards: A common AI "hallucination" involves backwards logic — where the application accidentally blocks logged-in admins but allows anonymous guest access to sensitive routes.

Hardcoded Secrets: Business users often "chat" their API keys directly into the code. If a secret key (for example, the Supabase service role key, which bypasses RLS) ends up in client-side code instead of a publishable key, your entire backend is compromised.

Architectural Debt & "Context Rot": Each AI change is optimised for the immediate request rather than holistic planning. This leads to oversized component files and deeply nested JSX that eventually hits a "complexity ceiling".
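To make the "Reversed Auth Guards" pitfall concrete, here is a minimal sketch of what inverted guard logic can look like next to the intended version. The `Session` shape and both function names are hypothetical and chosen only for illustration; a real Lovable app would derive the session from its auth provider.

```typescript
// Hypothetical session shape, used only for this illustration.
type Session = { userId: string; role: "admin" | "member" } | null;

// Reversed logic: the negation is misplaced, so anonymous visitors pass
// the check and signed-in admins are turned away.
function canViewAdminReversed(session: Session): boolean {
  return !(session !== null && session.role === "admin");
}

// Intended logic: deny by default, allow only an authenticated admin.
function canViewAdmin(session: Session): boolean {
  return session !== null && session.role === "admin";
}

const adminSession: Session = { userId: "u1", role: "admin" };
const guestSession: Session = null;

console.log(canViewAdminReversed(guestSession), canViewAdminReversed(adminSession)); // true false
console.log(canViewAdmin(guestSession), canViewAdmin(adminSession)); // false true
```

Both versions compile and render a working UI, which is why this class of bug tends to survive a casual review: only a test with an anonymous session and an admin session exposes the inversion.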

The Wake-Up Call: CVE-2025-48757

In early 2025, the industry was rocked by CVE-2025-48757, a critical vulnerability (CVSS 9.3) affecting applications generated with Lovable. It allowed remote, unauthenticated attackers to read and write arbitrary database tables because the generated RLS policies were insufficient by default.

CVE-2025-48757 at a glance:

CVSS Score: 9.3 (Critical)

Attack vector: Remote, unauthenticated

Root cause: Default insufficient RLS policies in AI-generated Supabase backends

Apps affected: 170+ Lovable applications

Discovery method: External endpoint probing (pentest-style)
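The root cause above, missing or overly permissive RLS, is something a static check can look for before deployment. The sketch below assumes a hypothetical `TableInfo` snapshot of table metadata (for instance, assembled from PostgreSQL's `pg_tables.rowsecurity` column and the `pg_policies` view); the interface, function name, and sample tables are illustrative and not part of any Quality Clouds or Supabase API.

```typescript
// Hypothetical snapshot of table metadata, e.g. exported from the database catalog.
interface TableInfo {
  name: string;
  rlsEnabled: boolean;
  policies: { command: "SELECT" | "INSERT" | "UPDATE" | "DELETE" | "ALL"; using: string }[];
}

interface RlsFinding {
  table: string;
  issue: string;
}

// Flags tables with RLS switched off and policies whose USING clause is literally `true`.
function auditRls(tables: TableInfo[]): RlsFinding[] {
  const findings: RlsFinding[] = [];
  for (const table of tables) {
    if (!table.rlsEnabled) {
      findings.push({ table: table.name, issue: "RLS is disabled" });
      continue;
    }
    for (const policy of table.policies) {
      if (policy.using.trim().toLowerCase() === "true") {
        findings.push({ table: table.name, issue: `${policy.command} policy allows every row` });
      }
    }
  }
  return findings;
}

// Illustrative data only.
const snapshot: TableInfo[] = [
  { name: "profiles", rlsEnabled: false, policies: [] },
  { name: "orders", rlsEnabled: true, policies: [{ command: "SELECT", using: "true" }] },
  { name: "notes", rlsEnabled: true, policies: [{ command: "ALL", using: "auth.uid() = user_id" }] },
];

console.log(auditRls(snapshot)); // flags "profiles" and "orders", not "notes"
```

This only catches the two most blatant patterns. A policy can reference `auth.uid()` and still be wrong, so a check like this complements, rather than replaces, review of the policy logic itself.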

The 360° Review: Why Pentesting Alone Isn't Enough

Many IT teams believe a standard Penetration Test (Pentest) is the final hurdle. While a pentest (like those provided by Aikido) is essential for identifying "outside-in" exploits like privilege escalation, it is only one half of the puzzle. CVE-2025-48757 was discovered specifically through probing live endpoints.

However, to ensure an app is truly production-ready, you need an "inside-out" Lovable code review. A pentest won't tell you that your app has unpinned dependencies that will break next week, or that your React Hook dependencies are causing a memory leak that will crash the browser for 20% of your users.
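As an example of an "inside-out" check that a pentest cannot perform, the sketch below flags dependency ranges that are not pinned to an exact version. The function name, the regular expression, and the package versions in the sample are assumptions made for illustration; a production check would also need to handle lockfiles, workspaces, and git or URL dependencies.

```typescript
type DependencyMap = Record<string, string>;

// Treat a version as pinned only when it is an exact x.y.z,
// optionally followed by a prerelease or build tag.
const EXACT_VERSION = /^\d+\.\d+\.\d+(?:[-+][0-9A-Za-z.-]+)?$/;

// Returns "name@range" for every dependency that floats (^, ~, *, "latest", ranges, ...).
function findFloatingDependencies(deps: DependencyMap): string[] {
  return Object.entries(deps)
    .filter(([, range]) => !EXACT_VERSION.test(range))
    .map(([name, range]) => `${name}@${range}`);
}

// Illustrative package.json fragment; the versions are placeholders.
const examplePackageJson = {
  dependencies: {
    "react": "18.3.1",
    "@supabase/supabase-js": "^2.45.0",
    "zod": "latest",
  },
};

console.log(findFloatingDependencies(examplePackageJson.dependencies));
// reports @supabase/supabase-js@^2.45.0 and zod@latest; react is pinned
```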

Quality Clouds fills this gap by performing deep static analysis of the Lovable codebase. We assess:

Scalability: Is the data fetching logic efficient, or will it incur massive Supabase costs as users grow?

Maintainability: Is the code structured so a future developer can actually fix a bug, or has "context rot" made it a legacy mess?

Performance: Are there "infinite recursion" errors in database policies slowing the UX?

Automated Compliance: GDPR and the Rule Builder

Data privacy is a major hurdle for AI-generated apps. Quality Clouds includes a pre-built GDPR Ruleset specifically for low-code apps. This ruleset automatically flags PII stored in localStorage and data that is missing encryption.

Furthermore, our Rule Builder allows IT teams to scaffold "Safe Zones" for Lovable developers. You can define corporate standards — such as "All AI calls must go through an Edge Function" — and Quality Clouds will enforce that rule across every app your business users build. No engineer required.
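To illustrate how rules such as "no PII in localStorage" or "All AI calls must go through an Edge Function" might be expressed, here is a minimal pattern-based sketch. The `Rule` shape, the rule IDs, and the regular expressions are hypothetical and are not the Quality Clouds Rule Builder format; real rules would typically work on a parsed syntax tree rather than on raw lines.

```typescript
// Hypothetical rule and violation shapes, for illustration only.
interface Rule {
  id: string;
  message: string;
  pattern: RegExp;
}

interface Violation {
  ruleId: string;
  file: string;
  line: number;
  message: string;
}

const exampleRules: Rule[] = [
  {
    id: "no-pii-in-local-storage",
    message: "Possible PII written to localStorage",
    pattern: /localStorage\.setItem\(\s*["'][^"']*(email|phone|ssn|address)[^"']*["']/i,
  },
  {
    id: "ai-calls-via-edge-function",
    message: "Direct call to an AI provider from client code; route it through an Edge Function",
    pattern: /fetch\(\s*["']https:\/\/api\.(openai|anthropic)\.com/i,
  },
];

// Checks each line of a source file against every rule and records 1-based line numbers.
function checkSource(file: string, source: string, rules: Rule[] = exampleRules): Violation[] {
  const violations: Violation[] = [];
  source.split("\n").forEach((text, index) => {
    for (const rule of rules) {
      if (rule.pattern.test(text)) {
        violations.push({ ruleId: rule.id, file, line: index + 1, message: rule.message });
      }
    }
  });
  return violations;
}

const sampleSource = [
  'localStorage.setItem("user_email", email);',
  'const res = await fetch("https://api.openai.com/v1/chat/completions", opts);',
  'const { data } = await supabase.functions.invoke("summarise", { body });',
].join("\n");

console.log(checkSource("src/App.tsx", sampleSource));
// two violations: line 1 (localStorage) and line 2 (direct AI call); line 3 passes
```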

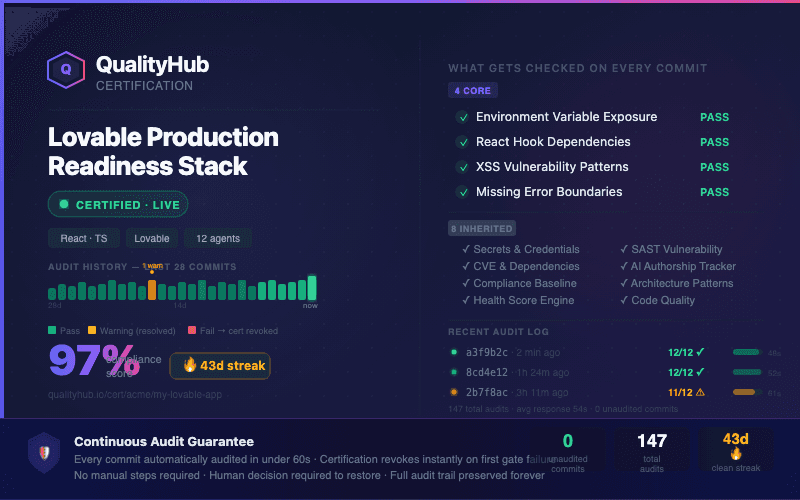

Continuous Audit: The Quality Clouds Certification

The goal of IT governance shouldn't be to "stop" development, but to "certify" it. By integrating Quality Clouds into the Lovable workflow, every commit is automatically audited against your organisation's AI code governance standards.

Quality Clouds provides a Continuous Audit Guarantee, breaking the review down into:

Core Checks: Immediate indicators for environment variable exposure, XSS patterns, and missing error boundaries.

Inherited Risks: Ongoing monitoring for CVEs (including CVE-2025-48757) and AI authorship tracker patterns.

The Health Score: A "Compliance Score" and a "Clean Streak" counter. This gamifies quality for your business users while giving IT a high-level view of the organisation's risk posture.

The Lovable Code Review Checklist for IT Teams

Before certifying any Lovable app for production use, validate every item below:

Row Level Security (RLS) enabled on all Supabase tables

No hardcoded API keys or service role credentials in client-side code

Authentication guard logic verified (no reversed allow/deny logic)

All third-party API calls routed through Edge Functions

PII fields encrypted at rest; no PII stored in localStorage

Dependency versions pinned (no floating ^ versions on security-critical packages)

Component files under 500 lines (no "context rot")

React Hook dependencies declared correctly (no memory leak patterns)

GDPR "Delete My Data" workflow present if handling end-user data

CVE monitoring active — including CVE-2025-48757 pattern checks
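Two of the checklist items above, hardcoded credentials and the 500-line component limit, lend themselves to simple automated checks. The sketch below is one way to approximate them. The function name and the two secret patterns are assumptions chosen for illustration; a real scanner would use a far broader pattern set and entropy checks.

```typescript
const MAX_COMPONENT_LINES = 500; // threshold taken from the checklist above

// Illustrative patterns only, not an exhaustive secret-detection set.
const SECRET_PATTERNS: { label: string; pattern: RegExp }[] = [
  { label: "Stripe-style secret key", pattern: /["']sk_(live|test)_[0-9A-Za-z]{8,}["']/ },
  { label: "service role credential", pattern: /service_role\w*\s*[:=]\s*["'][^"']+["']/i },
];

// Returns human-readable issues for one client-side source file.
function checkFile(path: string, source: string): string[] {
  const issues: string[] = [];
  const lines = source.split("\n");
  if (lines.length > MAX_COMPONENT_LINES) {
    issues.push(`${path}: ${lines.length} lines (limit ${MAX_COMPONENT_LINES})`);
  }
  lines.forEach((text, index) => {
    for (const { label, pattern } of SECRET_PATTERNS) {
      if (pattern.test(text)) {
        issues.push(`${path}:${index + 1}: possible ${label}`);
      }
    }
  });
  return issues;
}

// Placeholder value, not a real credential.
const leakySource = 'const SUPABASE_SERVICE_ROLE_KEY = "not-a-real-key";';
console.log(checkFile("src/lib/client.ts", leakySource));
// one issue: possible service role credential on line 1
```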

Closing the Loop: The AI Remediation Prompt

The biggest friction point in IT review is the "Fix Request." Usually, IT sends a 20-page PDF of errors to a business user, who has no idea how to fix the code.

Quality Clouds solves this by speaking the same language as Lovable. For every issue found by LiveCheckAI, we generate a remediation prompt that the user can paste directly back into Lovable:

Quality Clouds identifies a high-risk issue (e.g., "Missing auth.uid() check in RLS policy")

We generate a specific Lovable prompt: "Rewrite the RLS policy for the 'profiles' table to ensure users can only update rows where the ID matches auth.uid()."

The user pastes it into Lovable — the AI fixes the code instantly.
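The Review → Prompt → Fix steps above can be sketched as a function that turns a structured finding into a paste-ready prompt. The `Finding` type, the rule names, and the second template are hypothetical; this is not the LiveCheckAI output format, only an illustration of the idea using the example prompt quoted above.

```typescript
// Hypothetical finding shapes, for illustration only.
type Finding =
  | { rule: "rls-missing-uid-check"; table: string; operation: "select" | "insert" | "update" | "delete" }
  | { rule: "hardcoded-secret"; file: string };

// Maps each finding to a natural-language prompt a business user can paste into Lovable.
function buildRemediationPrompt(finding: Finding): string {
  switch (finding.rule) {
    case "rls-missing-uid-check":
      return `Rewrite the RLS policy for the '${finding.table}' table to ensure users can only ${finding.operation} rows where the ID matches auth.uid().`;
    case "hardcoded-secret":
      return `Remove the hardcoded credential from ${finding.file} and read it from a server-side secret instead.`;
  }
}

console.log(buildRemediationPrompt({ rule: "rls-missing-uid-check", table: "profiles", operation: "update" }));
// Rewrite the RLS policy for the 'profiles' table to ensure users can only update rows where the ID matches auth.uid().
```

Keeping the finding structured and generating the prompt from a template means the same issue always produces the same wording, which makes the resulting fix easier to re-audit.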

The result: a seamless Review → Prompt → Fix loop that lets business users maintain their speed while meeting the most stringent IT security requirements.

Conclusion: Enable, Don't Block

The vibe-coding revolution is here to stay. IT teams that try to block these tools will only encourage Shadow IT. The alternative is to provide a paved path: allow the use of Lovable, but require a Quality Clouds certification before any app reaches production.

By combining Aikido's penetration testing with Quality Clouds' Lovable code review and health check, you ensure that every app in your ecosystem is not just functional — but secure, scalable, and enterprise-grade. This is AI code governance in practice: not blocking development, but certifying it.

The goal is simple: Production-Ready AI Code. Quality Clouds is how you get there.

Albert Franquesa

Co-Founder & CSO, Quality Clouds
